Intelligent Retrieval

Retrieval That Thinks Before It Searches

Five layers of intelligence between your question and your answer. Unlike single-strategy systems, Courdx runs semantic search, keyword matching, and knowledge graph traversal simultaneously — then fuses the results into one optimized ranking.

THE FIVE LAYERS

Query Understanding

We Don't Search What You Typed. We Search What You Meant.

Query Decomposition

Example:

"Compare our Q3 revenue to competitors"

What we search:

1. Our company Q3 revenue figures

2. Competitor Q3 revenue reports

3. Revenue gap analysis factors

Predictive Search

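Instead of searching for your question, we generate what the perfect answer would look like, then find documents that match THAT. A drafted answer tends to share more vocabulary and structure with the source documents than a short question does, which improves recall for complex questions.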

Query Expansion

Example:

"AR status"

What we search:

1. Accounts Receivable status

2. Customer invoice payments

3. Outstanding balances
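
To make the "AR status" example concrete, here is a minimal sketch of query expansion. It assumes a hand-written abbreviation table and a related-terms table (`ABBREVIATIONS`, `RELATED`, and `expand_query` are hypothetical names for illustration); a production system would more likely derive expansions from a language model or a domain thesaurus.

```python
# Hypothetical lookup tables, for illustration only.
ABBREVIATIONS = {"ar": "accounts receivable"}
RELATED = {
    "accounts receivable": ["customer invoice payments", "outstanding balances"],
}


def expand_query(query: str) -> list[str]:
    """Spell out known abbreviations, then append related search phrases."""
    words = [ABBREVIATIONS.get(word.lower(), word) for word in query.split()]
    expanded = " ".join(words)
    variants = [expanded]
    for term, related_phrases in RELATED.items():
        if term in expanded.lower():
            variants.extend(related_phrases)
    return variants


print(expand_query("AR status"))
# ['accounts receivable status', 'customer invoice payments', 'outstanding balances']
```

Each variant would then be sent to the retrieval layer as its own search.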

Multi-Strategy Retrieval

Three Search Strategies Running Simultaneously. One Intelligent Result.

Meaning-Based Search

Understands the meaning behind your words, not just the words themselves. Finds relevant content even when different terminology is used.

Keyword Matching

Exact term matching for precision when it matters — product codes, legal terms, proper nouns, and specific identifiers. The same approach as traditional search engines, refined for enterprise documents.
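
This page does not say which scoring function sits behind keyword matching. BM25 is the ranking function used by many traditional search engines, so the sketch below assumes it purely for illustration. The function name `bm25_scores`, the whitespace tokenizer, and the parameter defaults `k1=1.5` and `b=0.75` are all assumptions.

```python
import math
from collections import Counter


def bm25_scores(query: str, docs: list[str], k1: float = 1.5, b: float = 0.75) -> list[float]:
    """Score each document against the query with BM25 (whitespace tokens, lowercased)."""
    tokenized = [doc.lower().split() for doc in docs]
    n_docs = len(tokenized)
    avg_len = sum(len(tokens) for tokens in tokenized) / n_docs
    # Document frequency: in how many documents each term appears.
    doc_freq = Counter(term for tokens in tokenized for term in set(tokens))

    scores = []
    for tokens in tokenized:
        term_freq = Counter(tokens)
        score = 0.0
        for term in query.lower().split():
            if term not in term_freq:
                continue
            idf = math.log(1 + (n_docs - doc_freq[term] + 0.5) / (doc_freq[term] + 0.5))
            length_norm = k1 * (1 - b + b * len(tokens) / avg_len)
            score += idf * term_freq[term] * (k1 + 1) / (term_freq[term] + length_norm)
        scores.append(score)
    return scores


docs = [
    "part SKU-4471 shipping manifest",
    "quarterly revenue summary",
    "SKU-4471 SKU-4471 recall notice",
]
print(bm25_scores("SKU-4471", docs))
```

Documents that never mention the exact identifier score zero, which is the precision property that matters for product codes and legal terms.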

Knowledge Graph Traversal

Walks the connections between entities to surface related information that neither meaning-based nor keyword search would find on their own.
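
As a rough illustration of what "walking the connections" can mean, the sketch below does a breadth-first walk over a small adjacency map and returns every entity within a fixed number of hops. The graph contents, the `related_entities` name, and the two-hop limit are hypothetical; the actual traversal strategy is not described on this page.

```python
from collections import deque


def related_entities(graph: dict[str, list[str]], start: str, max_hops: int = 2) -> dict[str, int]:
    """Return entities reachable from `start` within `max_hops`, mapped to hop distance."""
    distance = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distance[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in graph.get(node, []):
            if neighbor not in distance:
                distance[neighbor] = distance[node] + 1
                queue.append(neighbor)
    del distance[start]
    return distance


# Hypothetical entity graph for illustration.
graph = {
    "Acme Corp": ["Contract 17", "Jane Doe"],
    "Contract 17": ["Invoice 204"],
    "Invoice 204": ["Payment 9"],
}
print(related_entities(graph, "Acme Corp"))
# {'Contract 17': 1, 'Jane Doe': 1, 'Invoice 204': 2}
```

"Invoice 204" never mentions "Acme Corp" by name or meaning, yet the graph surfaces it through the contract that links them.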

Smart Result Fusion

Combines results from all three search strategies into one optimized ranking. Each strategy votes on which documents matter most, and the fusion algorithm merges those votes — so you get the best of meaning, keyword, and graph search in every result.
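
The fusion algorithm is not named here. Reciprocal rank fusion (RRF) is one widely used method that matches the "voting" description, so the sketch below assumes it for illustration. The function name, the document IDs, and the constant `k=60` (a commonly used default) are assumptions.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists; each list contributes 1 / (k + rank) per document."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties broken by document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


semantic = ["d1", "d2", "d3"]
keyword = ["d2", "d4", "d1"]
graph = ["d2", "d1", "d5"]
print(reciprocal_rank_fusion([semantic, keyword, graph]))
# ['d2', 'd1', 'd4', 'd3', 'd5']
```

Because RRF works on ranks rather than raw scores, the three strategies do not need comparable scoring scales. A document ranked well by several strategies rises above one ranked first by only a single strategy.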

Intelligent Reranking

Finding Documents Is Easy. Finding RELEVANT Documents Is Hard.

Deep Reranking

A specialized AI model reads each query-document pair together and scores true relevance — not just word similarity, but whether the document actually answers your question.

AI Reranking

For complex queries, an AI model evaluates each result: "Does this actually answer the question?"

Corrective RAG

When Retrieval Fails, We Don't. We Fix It.

Automatic Validation

Every retrieved document is graded for relevance, and a confidence score is calculated before any response is generated.

Self-Healing Retrieval

- High confidence → Proceed with answer

- Ambiguous → Refine query, re-retrieve

- Low confidence → Fall back to web search
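
The three branches above can be sketched as a small routing function. This assumes each retrieved document already carries a relevance grade between 0 and 1 and that overall confidence is taken from the best-graded document. The thresholds (`high=0.7`, `low=0.3`) and all names are hypothetical, not Courdx's actual values.

```python
from enum import Enum


class Action(Enum):
    ANSWER = "proceed with answer"
    REFINE = "refine query and re-retrieve"
    WEB_FALLBACK = "fall back to web search"


def choose_action(relevance_grades: list[float], high: float = 0.7, low: float = 0.3) -> Action:
    """Pick the next step from per-document relevance grades (assumed to be in [0, 1])."""
    if not relevance_grades:
        return Action.WEB_FALLBACK  # nothing retrieved counts as low confidence
    confidence = max(relevance_grades)  # assumption: the best document sets confidence
    if confidence >= high:
        return Action.ANSWER
    if confidence <= low:
        return Action.WEB_FALLBACK
    return Action.REFINE


print(choose_action([0.9, 0.2]))  # Action.ANSWER
print(choose_action([0.5, 0.4]))  # Action.REFINE
print(choose_action([0.1, 0.2]))  # Action.WEB_FALLBACK
```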

Guardrails

Protection Built In, Not Bolted On

Input Guardrails

- Prompt injection detection

- PII scrubbing from queries

- Blocked topic enforcement
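
As one illustration of PII scrubbing, the sketch below replaces a few easily recognized patterns with placeholder tags before a query reaches retrieval. The patterns cover only emails, US-style Social Security numbers, and US-style phone numbers, and the names `PII_PATTERNS` and `scrub_pii` are hypothetical. Real PII detection usually needs far broader coverage than a handful of regular expressions.

```python
import re

# Illustrative patterns only; applied in this order.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def scrub_pii(query: str) -> str:
    """Replace each recognized PII pattern with a bracketed label."""
    for label, pattern in PII_PATTERNS.items():
        query = pattern.sub(f"[{label}]", query)
    return query


print(scrub_pii("Email jane.doe@example.com or call 555-123-4567 about SSN 123-45-6789"))
# Email [EMAIL] or call [PHONE] about SSN [SSN]
```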

Output Guardrails

- Hallucination detection

- PII leak prevention

- Citation verification

- Toxicity filtering
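
Citation verification can take several forms. The simplest check, sketched below, confirms that every bracketed citation in an answer points at a document that was actually retrieved. The `[doc_id]` citation format and the `verify_citations` name are assumptions, and this check does not confirm that the cited document supports the claim, which would need a further comparison of the text.

```python
import re


def verify_citations(answer: str, retrieved_ids: list[str]) -> dict[str, list[str]]:
    """Split cited IDs into those present in the retrieved set and those that are not."""
    cited = set(re.findall(r"\[(\w+)\]", answer))
    retrieved = set(retrieved_ids)
    return {
        "valid": sorted(cited & retrieved),
        "unsupported": sorted(cited - retrieved),
    }


# Made-up answer text for illustration.
answer = "Revenue rose [doc1] while costs fell [doc7]."
print(verify_citations(answer, ["doc1", "doc2"]))
# {'valid': ['doc1'], 'unsupported': ['doc7']}
```

An answer with any entry under `unsupported` could be blocked or regenerated before it reaches the user.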

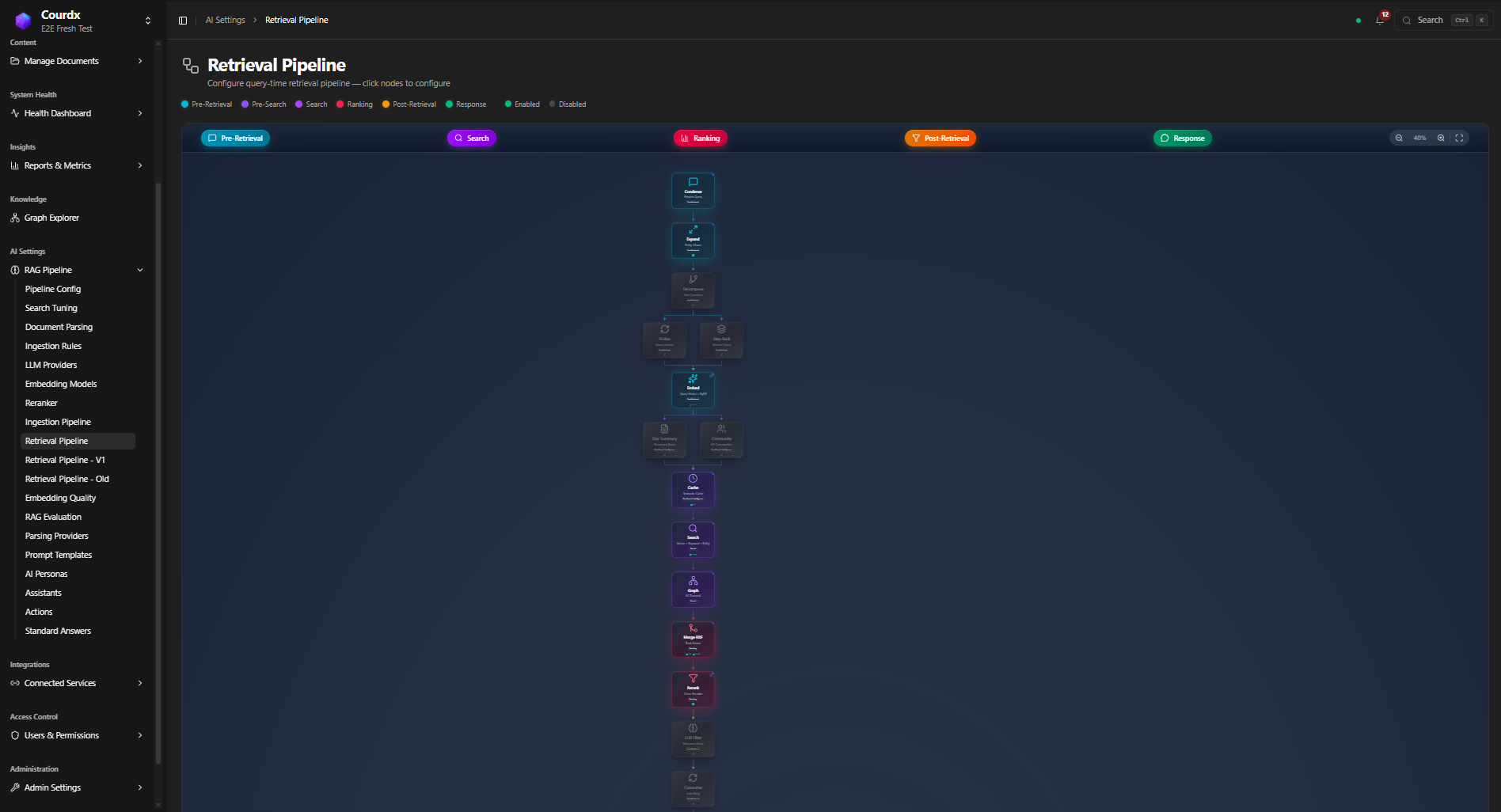

The Full Picture

See the Retrieval Pipeline in Action

Color-coded stages from pre-retrieval through search, ranking, post-retrieval, and response — every step visible and configurable.

GET STARTED

Experience Intelligent Retrieval

See how these five layers work together with your actual documents.